Advanced .htaccess Guide for Better Security and Performance

The .htaccess file holds directory-level configuration directives, often called .htaccess codes, for the Apache HTTP Server. If you are wondering what .htaccess is, it lets website owners override specific server directives without modifying the main Apache configuration file. In shared hosting environments, it is often the only available method to control redirects, access policies, caching behavior, and URL structure.

To clarify further what .htaccess is: it is applied during Apache's request processing, before the request is handed off to your CMS or backend logic. That means it can block, rewrite, or redirect traffic at the server layer. This makes it extremely powerful, but also easy to misuse if you do not understand execution order and scope.

Because Apache checks for .htaccess files on every request when overrides are enabled, heavy use can introduce overhead. On VPS or dedicated servers, permanent rules are typically migrated into the main configuration for better performance and maintainability.

For practical .htaccess snippets, see our previous article.

How Apache Processes .htaccess Files

Understanding execution flow prevents most configuration mistakes. To understand what .htaccess is, note that Apache does not treat it as a global configuration file. Instead, it reads and evaluates the file dynamically on each request.

- Apache loads its main configuration first: The global configuration defines what overrides are allowed. .htaccess cannot bypass restrictions defined at the server level. It only works within permitted directive categories.

- Apache checks whether AllowOverride is enabled: If AllowOverride is set to None, Apache ignores .htaccess files completely. The directive can also be limited to specific directive categories such as FileInfo or AuthConfig. AllowOverride itself is set in a <Directory> block of the main configuration, not in .htaccess. Many cases of rules not working are caused by restrictive override settings.

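- Apache looks for .htaccess in the requested directory and every parent directory: For a file requested from /www/htdocs/example, it checks /.htaccess, /www/.htaccess, /www/htdocs/.htaccess, and /www/htdocs/example/.htaccess (wherever overrides are allowed), applying them from the top down. This cascading lookup means rules in the document root can affect the entire site.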

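- Directives cascade downward unless overridden: A .htaccess file in a subdirectory can redefine behavior from a parent directory. This allows granular control but also creates hidden inheritance that can complicate debugging. mod_rewrite is a notable exception: by default, rewrite rules defined in a subdirectory's .htaccess replace the parent's rules rather than adding to them, unless RewriteOptions Inherit is set.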

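- Rules are evaluated from top to bottom: Within a module such as mod_rewrite, order determines execution. A broad redirect placed above a specific rule can prevent that specific rule from ever running. Directives from different modules, such as mod_alias Redirect and mod_rewrite RewriteRule, are handled by separate modules, so their relative position in the file does not determine which runs first.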

- A syntax error immediately triggers a 500 Internal Server Error: Apache does not partially process the file. One invalid directive breaks the request entirely. Incremental testing is essential in production environments.
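AllowOverride is set in the main server or virtual host configuration, not in .htaccess itself. Below is a minimal sketch that permits only the override categories used in this guide. It assumes a hypothetical document root of /var/www/example:

```apache
# Main server or virtual host configuration (not .htaccess)
<Directory "/var/www/example">
    # mod_rewrite in .htaccess requires FollowSymLinks or SymLinksIfOwnerMatch
    Options SymLinksIfOwnerMatch
    # FileInfo: RewriteRule, Redirect, ErrorDocument, Header, AddType
    # AuthConfig: Require, AuthType, AuthUserFile
    # Indexes: mod_expires directives, DirectoryIndex
    # Options=Indexes: lets .htaccess toggle directory listings only
    AllowOverride FileInfo AuthConfig Indexes Options=Indexes
    Require all granted
</Directory>
```

Any directive in .htaccess that falls outside these categories produces a 500 error instead of being silently ignored.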

Syntax, Structure, And Module Dependencies

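.htaccess directives, often referred to as .htaccess codes, depend entirely on Apache modules. If a module is not loaded, Apache does not recognize its directives and returns a 500 error. The exception is directives wrapped in an <IfModule> block, which Apache silently skips.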

Commonly used modules include:

- mod_rewrite for URL rewriting and conditional routing. This is the most powerful and complex module. It enables pattern matching using regular expressions and supports conditional logic through RewriteCond.

- mod_headers for modifying HTTP response headers. This module allows you to define security headers, caching directives, and cross-origin policies.

- mod_expires for controlling cache expiration timing. It defines how long browsers should cache specific file types.

- mod_deflate for response compression. It enables GZIP compression, reducing file size during transmission.

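Even a default .htaccess configuration requires exact syntax. Directive names are case-insensitive, but many arguments, such as regular expression patterns and file paths, are case-sensitive. Incorrect flags or misplaced parameters will break the entire file. Comments must sit on their own line starting with #, because Apache does not allow a comment on the same line as a directive. Logical grouping and commenting are strongly recommended in production environments to maintain clarity.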
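To illustrate grouping and commenting, here is a minimal sketch of one self-contained block. The header value is an example choice. The <IfModule> guard means that if mod_headers is missing, Apache skips the block rather than failing:

```apache
# ------------------------------------------------------------
# Security headers (requires mod_headers)
# Guarded by <IfModule>: if the module is not loaded, Apache
# skips this block instead of returning a 500 error.
# ------------------------------------------------------------
<IfModule mod_headers.c>
    # Refuse to be framed by other sites (clickjacking protection)
    Header always set X-Frame-Options "SAMEORIGIN"
</IfModule>
```

The guard is a trade-off. It also hides the fact that a rule is not running, so some administrators leave critical directives unguarded so that a missing module fails loudly.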

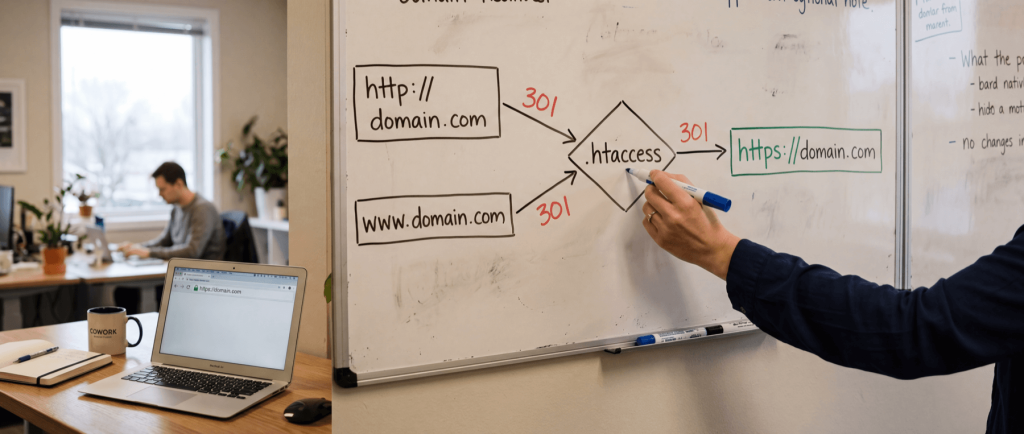

Redirects And Canonical Enforcement

Redirect rules in .htaccess control how users and search engines reach your content. Poor redirect configuration leads to duplicate content, broken indexing, and unnecessary latency.

301 redirects indicate permanent changes.

A 301 status transfers ranking signals to the new URL over time. These are used for domain migrations, structural URL updates, and HTTP to HTTPS enforcement.

302 redirects indicate temporary moves.

Search engines do not treat these as permanent. They are suitable for short-term maintenance or testing scenarios.
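Both status codes can be issued with mod_alias. Here is a minimal sketch; every path below is a hypothetical placeholder:

```apache
# Permanent move: the old URL is retired for good
Redirect 301 /old-page.html /new-page/

# Temporary move of one exact path (anchored pattern, optional trailing slash)
RedirectMatch 302 ^/events/?$ /events-coming-soon.html
```

Redirect matches by URL prefix and appends the remainder of the path. RedirectMatch with an anchored pattern is the safer choice when only one exact URL should move.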

Domain-level redirects manage migrations.

During domain changes, each old URL should map to its closest equivalent on the new domain. Redirecting everything to the homepage discards that mapping, and search engines may treat such redirects as soft 404s instead of passing ranking signals to the new pages.
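A path-preserving migration can be sketched with mod_rewrite. This assumes the old domain (hypothetically example.net) still points at the same document root, and the new canonical host is hypothetically www.example.com:

```apache
RewriteEngine On
# Match the old hostname, with or without www
RewriteCond %{HTTP_HOST} ^(www\.)?example\.net$ [NC]
# Send each path to the same path on the new domain.
# The original query string is carried over automatically.
RewriteRule ^(.*)$ https://www.example.com/$1 [R=301,L]
```

If some URLs changed structure during the move, place specific redirects for them above this catch-all so they run first.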

Canonical www or non-www enforcement prevents duplication.

Without enforcing one hostname format, the same page may be accessible through multiple URLs. This splits ranking authority and confuses indexing.
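As one example, this sketch enforces the non-www form without hard-coding a domain. It assumes HTTPS is available on the bare hostname:

```apache
RewriteEngine On
# Capture everything after "www." in the Host header
RewriteCond %{HTTP_HOST} ^www\.(.+)$ [NC]
# Redirect to the bare hostname, keeping the path and query string
RewriteRule ^ https://%1%{REQUEST_URI} [R=301,L]
```

%1 refers to the group captured by the RewriteCond. %{REQUEST_URI} holds the path without the query string, which mod_rewrite re-appends on its own.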

Forcing HTTPS with .htaccess secures traffic.

Redirecting every HTTP request to its HTTPS equivalent ensures encrypted communication. It also aligns with modern browser expectations and search engine preferences.
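A common way to force HTTPS with .htaccess is sketched below. It assumes TLS is terminated by Apache itself:

```apache
RewriteEngine On
# Only act on plain-HTTP requests
RewriteCond %{HTTPS} off
# Rebuild the same URL with the https scheme (query string is preserved)
RewriteRule ^ https://%{HTTP_HOST}%{REQUEST_URI} [R=301,L]
```

Behind a load balancer or CDN that terminates TLS, %{HTTPS} is always off from Apache's point of view, and this rule would loop. In that setup the check usually moves to a forwarded header such as X-Forwarded-Proto, depending on what your provider sends.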

Redirect chains should be avoided. A chain occurs when multiple redirects happen sequentially before reaching the final URL. Each additional hop increases response time and reduces crawl efficiency.
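To avoid a chain such as http://example.com → https://example.com → https://www.example.com, the scheme and host checks can be merged into a single hop. This is a minimal sketch assuming a hypothetical canonical host of www.example.com:

```apache
RewriteEngine On
# Redirect if the scheme is wrong OR the host is not the canonical one
RewriteCond %{HTTPS} off [OR]
RewriteCond %{HTTP_HOST} !^www\.example\.com$ [NC]
# One 301 straight to the final URL, so no request takes two hops
RewriteRule ^ https://www.example.com%{REQUEST_URI} [R=301,L]
```

Note that any other hostname served from the same document root, such as a staging subdomain, would also be redirected by this rule.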

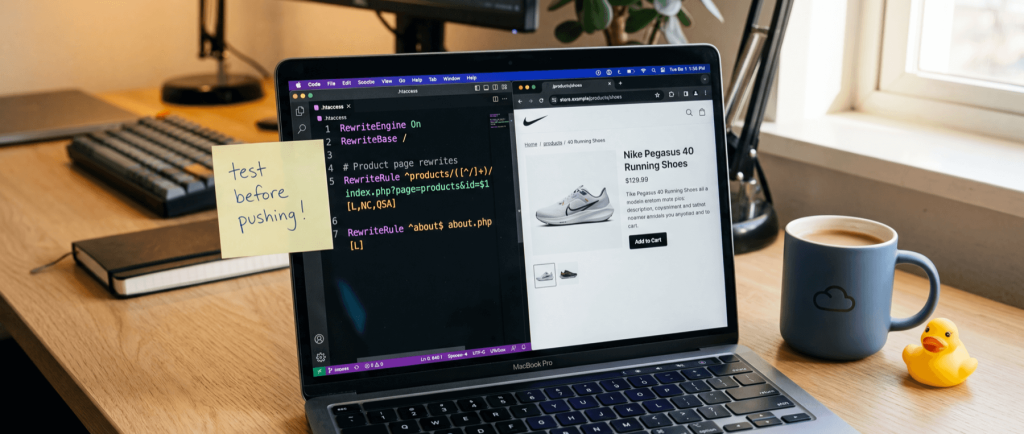

.htaccess Rewrites With mod_rewrite

An internal .htaccess rewrite changes which resource Apache serves for a request without changing the URL visible in the browser. This enables clean URL structures and centralized routing. The same directives can also issue external redirects, which do change the visible URL.

- RewriteEngine On activates rewriting – Without this directive, rewrite rules will not execute. It must appear before any RewriteRule or RewriteCond statements.

- RewriteRule defines the matching pattern and action – It uses regular expressions to match URLs and can either rewrite internally or trigger external redirects.

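- RewriteCond defines conditions that must be met – Conditions can inspect hostnames, query strings, request methods, and server variables. Each RewriteCond applies only to the RewriteRule that immediately follows it. Multiple conditions are combined with AND by default, or with OR using the [OR] flag.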

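- Flags control behavior – The L flag stops processing the remaining rules in the current pass. In .htaccess context, however, a rewritten request is run through the rules again, so the END flag (Apache 2.4 and later) is used when processing must stop completely. The R flag triggers redirects with specific status codes. The NC flag enables case-insensitive matching.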

- Clean URL structures improve usability – Rewriting allows dynamic URLs such as page.php?id=10 to appear as /page/10. This improves readability while preserving backend logic.
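The page.php?id=10 example can be sketched as a single internal rewrite. This assumes the .htaccess file and a hypothetical page.php both live in the document root:

```apache
RewriteEngine On
# Internally map /page/10 (or /page/10/) to page.php?id=10.
# No R flag, so the browser keeps showing /page/10.
# QSA keeps any extra query string, e.g. /page/10?ref=nav
RewriteRule ^page/([0-9]+)/?$ page.php?id=$1 [L,QSA]
```

In per-directory context the pattern is matched without a leading slash. If the directory is reached through an Alias, a RewriteBase directive may be needed for the relative substitution to resolve correctly.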

Rewrite conflicts are common when combining HTTPS enforcement, canonical redirection, and extension removal. Careful rule ordering and staged testing are critical.
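Extension removal is a typical source of loops, because the redirect and the internal rewrite undo each other. A minimal sketch of one common ordering is shown below. External redirects come first and internal rewrites last, and it assumes .php files in the document root:

```apache
RewriteEngine On

# 1. External redirect first: a GET for /about.php is sent to /about.
#    THE_REQUEST holds the original request line, so this rule does not
#    fire again after the internal rewrite below.
RewriteCond %{THE_REQUEST} ^GET\s/([^.\s?]+)\.php[\s?] [NC]
RewriteRule ^ /%1 [R=301,L]

# 2. Internal rewrite second: serve /about from about.php if that file exists
RewriteCond %{REQUEST_FILENAME} !-d
RewriteCond %{REQUEST_FILENAME}.php -f
RewriteRule ^(.+)$ $1.php [L]
```

Restricting the redirect to GET avoids turning form submissions to .php URLs into GET requests, which a 301 would otherwise do in most browsers.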

Security And Performance Controls

Security and performance adjustments in .htaccess operate at the web server layer, which means they are applied before a request reaches your application. This makes them efficient for filtering, restricting, and optimizing traffic early in the request lifecycle.

While .htaccess is not a replacement for proper server hardening or infrastructure-level optimization, it provides meaningful control in environments where deeper configuration access is restricted. In shared hosting setups especially, these directives are often the only available method to improve security posture and delivery speed.

Security Hardening

Security-focused rules in .htaccess help reduce exposure and limit attack vectors. In Apache 2.4, access control uses the Require directive instead of the older Order, Allow, and Deny syntax. These rules do not replace firewalls or application security controls, but they significantly lower risk at the HTTP level.
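The sketch below compares the two syntaxes. It assumes the lines go in the .htaccess of a hypothetical staging directory, and 203.0.113.10 (a documentation address) stands in for your own IP. Placed there, it locks the whole directory to that one address:

```apache
# Apache 2.2 style, shown commented out for comparison only.
# In 2.4 it needs mod_access_compat and should not be mixed with Require:
# Order deny,allow
# Deny from all
# Allow from 203.0.113.10

# Apache 2.4 equivalent: allow a single address, deny everyone else
Require ip 203.0.113.10
```

If the site sits behind a proxy or CDN, Apache sees the proxy's address unless mod_remoteip is configured at the server level.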

- Disable directory indexing – When no index file exists in a directory, Apache may display a file listing. Disabling indexing prevents users from browsing file structures, which could expose uploads, backups, or internal assets.

- Restrict access by IP address – You can explicitly allow or deny traffic from specific IPs or IP ranges. This is useful for blocking repeated malicious activity or restricting access to internal environments such as staging servers.

- Protect sensitive files – Configuration files, environment files, and other non-public assets should never be accessible via the browser. Explicit deny rules add an extra layer of protection even if file permissions are misconfigured.

- Block access to hidden or system files – Files beginning with a dot often contain system or configuration data. Blocking direct access reduces accidental exposure.

- Add directory-level authentication – Basic HTTP authentication can protect administrative areas or internal tools. While not a full authentication system, it adds a barrier against unauthorized access without modifying application logic.
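Several of these protections can be sketched for a site's root .htaccess. The blocked extensions are a hypothetical list to adjust for your own site:

```apache
# Disable automatic directory listings
# (requires AllowOverride Options or Options=Indexes)
Options -Indexes

# Deny direct access to backup, dump, and config-style files
<FilesMatch "\.(bak|old|sql|log|ini|env)$">
    Require all denied
</FilesMatch>

<IfModule mod_rewrite.c>
    RewriteEngine On
    # Return 403 for any dotfile or path inside a hidden directory
    # (e.g. /.env, /.git/config), but keep /.well-known/ reachable
    # because certificate validation uses it
    RewriteRule "(^|/)\.(?!well-known/)" - [F]
</IfModule>
```

FilesMatch only tests the final file name, which is why the rewrite rule is used to cover paths inside hidden directories such as .git.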

Performance Optimization

Performance optimization in .htaccess focuses on reducing payload size and minimizing unnecessary requests. Since these rules affect how responses are delivered, they can significantly improve perceived load speed when configured correctly.

- Enable compression for text-based assets – GZIP compression, when mod_deflate is enabled at the server level, reduces the size of HTML, CSS, and JavaScript files before they are transmitted. Smaller payloads result in faster transfer times and reduced bandwidth usage.

- Define browser caching rules – Static resources such as images, fonts, and scripts benefit from extended cache lifetimes. When properly configured, returning visitors load these resources from their local cache instead of requesting them again.

- Set appropriate Cache-Control headers – Cache-Control directives help distinguish between static and dynamic content. This prevents browsers from caching pages that should always be fresh while aggressively caching assets that rarely change.

- Reduce redirect chains – Each redirect introduces an additional HTTP request and response cycle. Consolidating redirects into a single step improves load time and reduces crawl inefficiency.

- Avoid unnecessary rewrite complexity – Overly complex rewrite logic increases processing time on every request. Streamlining rules improves both performance and maintainability.
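A minimal sketch combining compression, expiry, and Cache-Control is shown below. The MIME types must match what your server actually sends (JavaScript, for example, may be served as text/javascript), and the lifetimes are example values:

```apache
# Compress text-based responses. In Apache 2.4,
# AddOutputFilterByType itself is provided by mod_filter.
<IfModule mod_deflate.c>
    <IfModule mod_filter.c>
        AddOutputFilterByType DEFLATE text/html text/css text/plain application/javascript application/json image/svg+xml
    </IfModule>
</IfModule>

# Long browser cache lifetimes for static assets (mod_expires)
<IfModule mod_expires.c>
    ExpiresActive On
    ExpiresByType image/webp "access plus 1 year"
    ExpiresByType image/png "access plus 1 year"
    ExpiresByType font/woff2 "access plus 1 year"
    ExpiresByType text/css "access plus 1 month"
    ExpiresByType application/javascript "access plus 1 month"
</IfModule>

# HTML must be revalidated so visitors always see fresh pages (mod_headers)
<IfModule mod_headers.c>
    <FilesMatch "\.html?$">
        Header set Cache-Control "no-cache"
    </FilesMatch>
</IfModule>
```

Long lifetimes work best when asset file names change on each release (for example, a version string in the name). Otherwise returning visitors may keep stale CSS or scripts until the cache expires.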

In high-traffic environments, these optimizations are ideally handled at the server configuration or reverse proxy level. However, for many shared hosting deployments, .htaccess provides sufficient control to meaningfully improve speed and efficiency.

Situational Configurations And Edge Cases

Not every site requires these configurations, but they solve specific operational challenges.

Custom Error Documents

You can define branded 404, 403, and 500 pages instead of using default Apache responses. Custom error pages improve user experience and allow navigation back into the site.

Error pages should be lightweight and independent of complex rewrite rules to prevent recursive errors.
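A minimal sketch, assuming static error pages stored under a hypothetical /errors/ directory in the document root:

```apache
# Static, self-contained error pages
ErrorDocument 404 /errors/404.html
ErrorDocument 403 /errors/403.html
ErrorDocument 500 /errors/500.html
```

Use local paths rather than full URLs. An ErrorDocument pointing to an http:// address makes Apache send a redirect, so the client never receives the original 404 status. If the 500 is caused by a broken .htaccess, Apache cannot read these lines either, and visitors may see the default error page.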

Maintenance Mode Handling

Maintenance mode can be implemented by redirecting all visitors to a temporary page while allowing specific IP addresses to bypass restrictions.

This approach is useful during migrations or deployments. It should always be removed immediately after maintenance is complete to avoid unintended downtime.
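Below is a variation on this approach. Instead of a 302 redirect, the sketch answers with a 503 Service Unavailable status, so search engines treat the outage as temporary. The bypass address 203.0.113.10 and the page /maintenance.html are hypothetical:

```apache
RewriteEngine On
# Let the administrator's address through to the live site
RewriteCond %{REMOTE_ADDR} !^203\.0\.113\.10$
# Never block the maintenance page itself
RewriteCond %{REQUEST_URI} !^/maintenance\.html$
# Answer everything else with 503; the "-" means no substitution
RewriteRule ^ - [R=503,L]

# Serve the maintenance page as the 503 response body
ErrorDocument 503 /maintenance.html
```

Because every other request returns 503, the maintenance page should inline its own CSS and images. As with IP restrictions, REMOTE_ADDR shows the proxy's address if the site sits behind one.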

Response Headers And CORS

Using mod_headers, you can define security headers or CORS policies. This is necessary when integrating APIs or serving cross-origin resources.

Incorrect CORS configuration can either block legitimate access or unintentionally expose resources. Rules should be limited to specific paths when possible.
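A minimal sketch of both uses follows. Security headers are applied site-wide, while the CORS header is limited to font files for a single hypothetical origin, https://app.example.com:

```apache
<IfModule mod_headers.c>
    # Security headers on every response, including error pages
    Header always set X-Content-Type-Options "nosniff"
    Header always set Referrer-Policy "strict-origin-when-cross-origin"

    # CORS limited to web fonts, for one trusted origin only
    <FilesMatch "\.(woff2?|ttf)$">
        Header set Access-Control-Allow-Origin "https://app.example.com"
    </FilesMatch>
</IfModule>
```

Naming a specific origin instead of "*" keeps other sites from loading the resources freely. The FilesMatch scope keeps the policy away from pages and APIs that should not be shared.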

MIME Types And Content Handling

You can define custom MIME types for uncommon file formats. This ensures Apache sends the correct Content-Type header, so browsers handle the files correctly.

You can also force file downloads or explicitly define character encoding to prevent misinterpretation of content.
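A minimal sketch covering all three cases. The .webmanifest type and the CSV download rule are illustrative choices:

```apache
# Register a type for an uncommon extension (mod_mime)
AddType application/manifest+json .webmanifest

# Add charset=UTF-8 to text/plain and text/html responses
AddDefaultCharset UTF-8

# Force a download dialog for CSV exports (mod_headers)
<IfModule mod_headers.c>
    <FilesMatch "\.csv$">
        Header set Content-Disposition "attachment"
    </FilesMatch>
</IfModule>
```

AddDefaultCharset only affects text/plain and text/html. Other text formats such as CSS need AddCharset or an explicit charset in their type.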

Troubleshooting And Debugging

Even a default .htaccess configuration can fail completely if directives are invalid.

Common causes include:

- Syntax errors or misplaced directives

- Disabled Apache modules

- Conflicting rewrite rules

- Incorrect AllowOverride settings

The first step in debugging is checking the Apache error log, which usually names the failing file and the offending directive. Apply changes incrementally to isolate problems quickly.

Never deploy large rule blocks without testing them in staging. Debugging complex rewrite chains on a live site increases downtime risk.

Best Practices For Production Stability

Keep your .htaccess file minimal. The more logic it contains, the harder it becomes to maintain.

Comment each logical block clearly. Future updates will depend on understanding why a rule exists.

Avoid redundant redirects and overlapping rewrite patterns. Clean logic reduces risk of loops.

Test changes incrementally and verify behavior with tools that inspect HTTP status codes.

And if you control the server configuration directly, migrate permanent rules into Apache’s main configuration file for better performance and stability.
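As a rough sketch of such a migration, the HTTPS redirect below moves into the port 80 virtual host, and .htaccess lookups are switched off for the site. All names and paths are hypothetical:

```apache
# Virtual host configuration (not .htaccess)
<VirtualHost *:80>
    ServerName www.example.com
    ServerAlias example.com
    # HTTPS redirect handled here instead of in .htaccess
    Redirect permanent / https://www.example.com/
</VirtualHost>

<Directory "/var/www/example">
    # With permanent rules in the main config, stop reading .htaccess files
    AllowOverride None
</Directory>
```

Unlike .htaccess, changes here take effect only after Apache is reloaded. Running apachectl configtest first checks the main configuration's syntax.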

Conclusion

The .htaccess file is a powerful configuration layer that allows precise control over redirects, URL structure, security rules, and performance behavior on Apache servers. Because it operates before requests reach your application, it can enforce canonical URLs, block unwanted traffic, and optimize delivery at the server level.

Its flexibility requires careful handling. Clear structure, minimal rule sets, and incremental testing ensure stability. When used thoughtfully, .htaccess becomes a reliable tool for maintaining performance, security, and consistent URL behavior across your site.

Host Your Website With Reliable Performance From HostStage

If you need stable, fast hosting that supports proper redirects, caching, and overall site performance, we recommend our Business Shared Hosting plan at HostStage. It includes 15 GB NVMe SSD storage, 4 GB DDR4 ECC RAM, unlimited bandwidth, full cPanel access, and support for up to 50 MySQL databases, making it a strong fit for growing websites that need more than basic entry-level resources.

We also include free SSL certificates, daily backups, LiteSpeed-powered servers, and 24/7 support, so you can focus on your site while we handle the infrastructure. Choose HostStage Business Shared Hosting if you want reliable performance, solid resource allocation, and room to scale without moving to a VPS too early.