Vibe Coding: Accelerate AI-Assisted Code Generation

Vibe Coding transforms the way code is drafted, reviewed, and delivered. This guide explains how an iterative, AI-assisted code generation process shortens development cycles, improves turnaround times, and minimizes errors. It details the four phases of Vibe Coding, examines cost versus control, and outlines the necessary infrastructure for reliable AI-assisted workflows.

What is Vibe Coding? Let’s Define the Concept

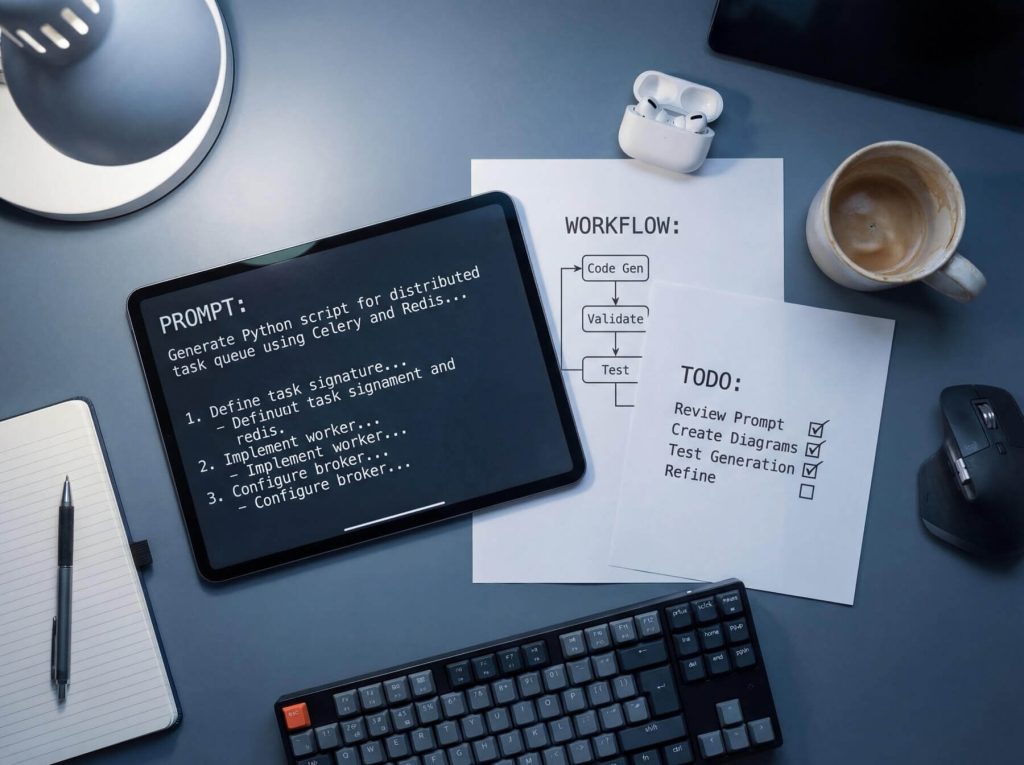

Vibe Coding uses an AI-assisted iterative process in which you describe the desired outcome, the model generates code, and you refine the results with rapid feedback until the output meets your team’s standards. By incorporating human review at every step, the time to turn intent into a working prototype is reduced. A prototype that once took six hours might now be ready in 90 minutes.

The workflow unfolds in four phases:

- Prompt Crafting: Provide the model with context—programming language, framework, constraints, and expected behavior.

- Generation: The model produces an initial draft, which may include handlers, tests, SQL queries, UI components, or integration code.

- Refinement: Developers address edge cases, tighten logic, and request clearer structure.

- Review: A developer verifies correctness, performance, and security before the code is deployed.

This process is similar to working with a junior engineer who produces quick drafts that require oversight to ensure quality.

Why Vibe Coding Matters for Your Business

Speed is critical when it reduces the gap between an idea, a prototype, and testable output. In traditional workflows, a developer might complete one to three prototype iterations daily; with AI assistance, that output can double for tasks such as building CRUD APIs, internal tools, or UI scaffolding.

Faster iterations enable earlier bug detection. For example, if a product manager revises a validation rule in the morning and the team tests it before lunch, fewer rounds of rework and QA are needed. Startups can validate logic within days, and enterprise teams can turn rough concepts into reviewable prototypes sooner, enabling feedback from architects and product stakeholders. For further examples on efficient workflows for small teams, see profitable AI side hustles.

Core Implementation Steps in Vibe Coding Workflows

Efficiency in Vibe Coding revolves around a three-step loop: providing clear intent, generating code with calibrated model settings, and applying human review alongside tests. Skipping one of these steps can turn a 30‑minute draft into hours of rework.

Step 1: Prompt Construction

A detailed prompt should do more than instruct the model to “build a login API.” It must specify the tech stack, input/output structures, constraints, and failure cases. For example:

“Create a Node.js Express POST /login endpoint using PostgreSQL, bcrypt, and JWT. Return 401 for invalid credentials, rate-limit to 5 requests per minute per IP, and include Jest tests for both success and failure cases.”

The greater the detail in the prompt, the better the initial accuracy and the fewer iterations required. Consider the table below:

| Prompt Complexity | Typical Contents | First-Pass Accuracy | Avg. Iteration Count |

|---|---|---|---|

| Low | Feature name only; no constraints | 35%–50% | 5–8 |

| Medium | Stack, endpoint details, basic rules | 60%–75% | 3–5 |

| High | Stack, schemas, edge cases, tests, style rules | 80%–92% | 1–3 |

Spending extra minutes crafting a detailed prompt can save significant rework time.

Step 2: Generation

For routine tasks like boilerplate code or CRUD operations, a mid-tier model is often sufficient. More complex tasks such as refactoring or multi-file changes may require a larger model. When configuring temperature-controlled settings, values between 0.1 and 0.3 tend to produce consistent outputs.

Step 3: Refinement

Treat the initial draft as a candidate rather than the final product. Developers must review naming conventions, error handling, and security practices before finalizing the code. Follow up with targeted directives such as “replace raw SQL with parameterized queries” or “add tests for null input.” If the AI output converges on verified code in one to three iterations, the workflow is efficient; if not, further adjustments might be necessary.

Paradigm Shift: From Prompt-Based Coding to VibeOps

When AI’s role expands from drafting individual functions to delivering full features, the process evolves into VibeOps. In this structured workflow, different roles are assigned: one agent drafts the code, another validates architecture and business logic, a QA agent handles testing, and a deployment agent prepares releases.

A typical VibeOps pipeline looks like this:

- Request/Issue

- Human developer defines scope, constraints, and acceptance criteria

- AI coding agent drafts code, tests, documentation, or refactors

- Human developer reviews logical and security considerations

- QA agent performs linting, unit tests, and regression checks

- Deployment agent builds artifacts and prepares the release

- Human approval for merge or rollback

By separating roles, rapid code generation becomes a more reliable process with clear handoffs and approvals.

When to Build vs. Buy: Cost and Control Trade-Offs

Once responsibilities are defined, the decision becomes whether to run the AI coding stack in-house or subscribe to a service. This decision involves considerations of monthly costs, the level of control over models and data, and the anticipated developer usage.

For self-hosting large language models (LLMs), consider GPU server costs (typically $600 to $2,500/month), storage, bandwidth, and operational overhead. SaaS models, on the other hand, often charge per developer—ranging from $20 to $100+ per month. For an example of cost efficiency for small teams, see AI automation business ideas.

Consider this comparison:

| Scenario | Self-Hosted Monthly Cost | SaaS Monthly Cost | Control Level | Integration Flexibility | Compliance Risk | Winner |

|---|---|---|---|---|---|---|

| 3–5 developers, light use | $800–$2,000 | $100–$500 | High | High | Low to medium | SaaS |

| 10–20 developers, medium use | $1,500–$4,000 | $800–$2,500 | High | High | Medium | Depends on compliance |

| 25+ developers, heavy daily use | $3,000–$8,000 | $3,000–$10,000+ | Very high | Very high | Lower if self-managed well | Self-hosted |

| Regulated industry, strict residency | $2,500–$8,000 | Often not viable | Very high | Very high | Lower with internal controls | Self-hosted |

| Fast pilot, no platform team | $800–$2,000 equivalent setup effort | $100–$1,000 | Medium | Medium | Medium | SaaS |

Infrastructure Selection for Vibe Coding Environments

Developer wait time is critical. The difference between a 400ms and a 2.5s response can significantly affect the user experience. The decision between a GPU and a CPU depends on the workload. CPUs might suffice for lightweight tasks, but GPUs are essential for larger models, longer context windows, and higher concurrency to achieve sub‑second token streaming.

Bare-metal servers provide predictable performance and isolation compared to virtual instances, which may face fluctuating latency. Managed Kubernetes with GPU node pools is another method to sustain user-facing metrics within acceptable limits. In addition, for developers needing a stable environment to host their AI development workflows, HostStage Managed VPS with cPanel offers plans starting at $37.45 per month in strategic locations such as Atlanta, Los Angeles, Amsterdam, and Lagos.

Consider the following table:

| Workload Profile | Model Range | Concurrency | Best Fit | Expected Response | Cost |

|---|---|---|---|---|---|

| Small team, light tasks | 3B–7B | 1–3 users | CPU virtual instance | 800ms–2s | Lowest |

| Mid-size team, interactive | 7B–13B | 3–10 users | GPU virtual instance | 300ms–900ms | Medium |

| Large team, heavy context | 13B+ | 10–25 users | GPU bare-metal | Consistent sub‑second | Higher |

For interactive workloads, low latency is essential. If prompt responses exceed one second during peak hours, it may be time to scale.

Scaling & Maintenance: Managing AI Dev Workloads

When prompt response times rise above 1.5 seconds during peak hours, developers might start batching requests instead of engaging in real time. To sustain productivity, set clear service level agreements (SLAs) such as ensuring a 95th percentile time-to-first-token under 1200ms, keeping queue waits below 300ms, and maintaining availability above 99.9% during business hours.

Consider these triggers:

| Trigger | Threshold | Action | Why It Matters |

|---|---|---|---|

| Queue wait time | >300ms for 2 minutes | Add one GPU node | Maintains interactive feel |

| Queue depth | >8 jobs per active GPU | Add one node | Prevents backlog |

| p95 first-token latency | >1200ms for 5 minutes | Add capacity/shift traffic | Keeps workflows usable |

| GPU utilization | >85% sustained | Add one node | Prevents saturation |

| Error rate | >2% for 5 minutes | Drain and reroute | Preserves reliability |

Governance & Code Review Controls in Vibe Coding

Unchecked output can introduce risks. AI-generated code might appear correct while missing key security practices or internal standards. Enforce branch protection by requiring pull requests, blocking direct pushes to the main branch, and mandating at least one human review for minor changes and two for sensitive areas.

Document what the AI generated, what was modified manually, and which files require comprehensive review using pull request templates. Integrate automated static (SAST) and dynamic (DAST) scans before merging to catch vulnerabilities early. For example, a GitHub Actions workflow might look like this:

name: pr-security-checks

on: [pull_request]

jobs:

sast:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- name: Run Semgrep

uses: semgrep/semgrep-action@v1

- name: Run dependency audit

run: npm audit --audit-level=high

Maintain audit trails that log prompt IDs, model versions, timestamps, and reviewer information so that every change is traceable.

Security & Compliance for AI-Generated Code Pipelines

Security measures must identify what the model modified, which data was processed, and what was returned. Harden your inference servers by restricting SSH access, disabling password logins, and enforcing strict outbound traffic rules. Use a web application firewall (WAF) to protect prompt APIs from oversized payloads and suspicious activity.

Data handling policies are vital. Minimize sensitive information in prompts, encrypt prompt logs and generated artifacts at rest, and enforce appropriate retention limits to comply with regulations such as GDPR and SOC 2. For additional guidance on securing your applications, consult the Advanced .htaccess Guide for Better Security and Performance.

Integrating CI/CD & DevOps in Vibe Coding Workflows

For AI-generated code, treat every build as a release artifact that must be traceable and easily reversible. Ephemeral test environments per pull request help catch runtime issues early. Include metadata such as commit SHAs, model versions, prompt IDs, and reviewer details so that rollbacks are straightforward.

Blue/green or canary release strategies can help limit impact if an AI-assisted feature encounters issues, ensuring that any rollout problem is manageable and rollbacks are simple.

Future Trends: The Next Phase of VibeOps

The evolution of AI-assisted coding lies in improved orchestration. Rather than channeling every task through a single model, future pipelines may distribute work across specialized models optimized for tasks like boilerplate generation, refactoring, or security reviews. Additionally, inference placement may include on-premise and edge options to further reduce latency.

As governance standards mature, emerging practices for prompt provenance and AI code licensing will be critical. Regular stress-testing of your infrastructure—by monitoring queue times, cold starts, token throughput, and latency—is recommended. Finally, standardize your CI/CD pipelines with strict permissions, comprehensive change logs, and human approvals for final commits.

FAQ

What is Vibe Coding?

Vibe Coding is a process where an AI drafts code based on detailed prompts and developers refine the output through rapid, iterative feedback.

How does Vibe Coding boost productivity?

By reducing the time from idea to working prototype, Vibe Coding enables faster iteration, earlier bug detection, and quicker code adjustments.

What factors should be considered when choosing between self-hosting and SaaS for AI coding tools?

Consider monthly costs, the level of control over models and data, developer usage, and compliance requirements. Self-hosting typically offers more control, whereas SaaS can be more cost-effective for smaller teams.

How do infrastructure choices affect AI-assisted coding workflows?

Choosing between GPUs and CPUs, as well as opting for bare-metal versus virtual instances, directly impacts response times, concurrency, and the overall user experience during interactive sessions.

What security measures are recommended for managing AI-generated code pipelines?

Enforce branch protections, maintain detailed audit trails, run automated SAST/DAST scans, and secure inference servers with strict access controls.